The recent hype surrounding Swiss researchers and their "skill transfer" system is a classic case of academic theater. We are being told that a breakthrough in cross-platform robotics will finally allow a humanoid to learn from a robotic arm or a quadruped to mimic a biped. It sounds efficient. It sounds like the "GPT moment" for hardware.

It is actually a distraction from the physics of reality.

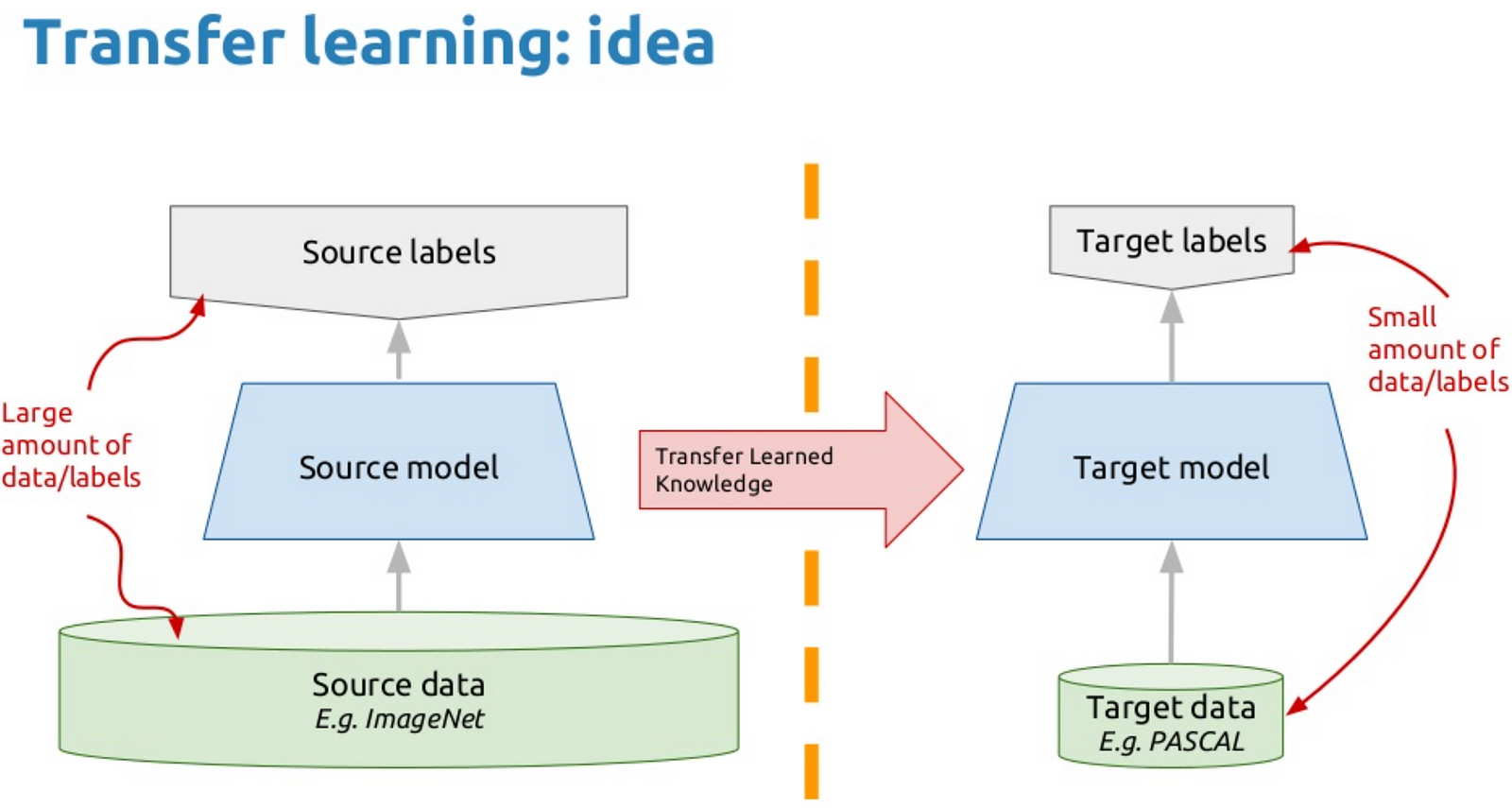

Industry insiders and lab-bound researchers are currently obsessed with the idea that data is fungible. They believe that if you train a neural network to understand "grasping" on a $100,000 industrial KUKA arm, you can somehow distill that essence and inject it into a $5,000 hobbyist bot.

I have spent a decade watching startups burn through Series A funding trying to "abstract away" the hardware. It never works. Why? Because in robotics, the hardware is the intelligence. When you try to decouple a skill from the specific motors, gear ratios, and sensor latency of the original machine, you aren't transferring a skill. You are transferring a hallucination.

The Morphology Trap

The fundamental error in the "Skill Transfer" narrative is the denial of embodiment. In AI circles, we talk about "embodied AI" as if the body is just a shell for the code. This is backwards.

The constraints of a robot's physical form dictate the possible solutions for any given task. Roboticists call this morphological computation: the body itself does part of the work the controller would otherwise have to do. If a robot has high-friction joints, its "skill" for walking will inherently include compensations for that friction. If you transfer that "walking" model to a low-friction, high-torque system, the robot won't just walk poorly; it will likely shake itself into mechanical failure.
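To make that failure mode concrete, here is a toy sketch of a single joint, not a model of any real robot. It assumes a PD controller with a friction-compensation term baked in for the source machine. The function name, gains, friction values, and the 1 rad step target are all invented for illustration, and there is no torque limit in the model.

```python
def peak_late_error(true_friction, assumed_friction, kp=20.0, kd=2.0,
                    inertia=1.0, dt=0.001, duration=5.0):
    """Step one joint toward a 1 rad target and return the largest
    absolute error seen during the final second of the run."""
    pos, vel, target = 0.0, 0.0, 1.0
    steps = round(duration / dt)
    peak = 0.0
    for i in range(steps):
        # PD control plus a compensation term tuned for the SOURCE robot's friction.
        torque = kp * (target - pos) - kd * vel + assumed_friction * vel
        # Viscous friction of the robot the controller is actually running on.
        acc = (torque - true_friction * vel) / inertia
        # Semi-implicit Euler integration.
        vel += acc * dt
        pos += vel * dt
        if i * dt >= duration - 1.0:
            peak = max(peak, abs(target - pos))
    return peak


# Same controller, two bodies (all values are assumptions).
source_peak = peak_late_error(true_friction=3.0, assumed_friction=3.0)
target_peak = peak_late_error(true_friction=0.1, assumed_friction=3.0)

print(f"source joint late error: {source_peak:.4f} rad")
print(f"target joint late error: {target_peak:.4f} rad")
```

With these assumed numbers, the net damping on the source joint is positive and the error dies out. On the low-friction joint the same compensation term pushes net damping negative, so the oscillation grows until something stops it. In a real machine, that something is a torque limit or a gearbox.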

Researchers are attempting to use "Action Mapping" or "Latent Space Alignment" to bridge this gap. They take the vector space of one robot's movements and try to find a mathematical transformation that fits another's.

It’s a beautiful math problem. It’s a terrible engineering solution.
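For a sense of what the simplest possible version of such a mapping looks like, here is a deliberately stripped-down sketch: a per-joint affine map fit by ordinary least squares from paired poses. The paired angles are invented, `fit_affine` and `map_action` are hypothetical names, and the research systems in question are far more elaborate than this.

```python
def fit_affine(xs, ys):
    """Ordinary least squares fit of y ~ a * x + b for a single joint."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    b = mean_y - a * mean_x
    return a, b


# Hypothetical joint angles (radians) recorded for the "same" poses on two robots.
source_angles = [0.0, 0.2, 0.4, 0.6, 0.8]
target_angles = [0.1, 0.5, 0.9, 1.3, 1.7]

a, b = fit_affine(source_angles, target_angles)


def map_action(source_angle):
    """Translate a source-robot joint angle into the target robot's frame."""
    return a * source_angle + b


print(f"scale={a:.3f}, offset={b:.3f}, mapped(0.5)={map_action(0.5):.3f}")
```

The fit on this made-up data is essentially exact, and that is the problem: the map aligns geometry and carries no information about torque limits, friction, or latency.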

Why Generalization is a Mirage

The "Lazy Consensus" in robotics right now is that we need a "General Foundation Model" for motion. The logic goes: "We did it for text with LLMs, so we can do it for motors."

This ignores the massive difference between the digital world of tokens and the physical world of stochasticity. In a text model, the word "apple" is always the same sequence of bits. In the physical world, the "skill" of picking up an apple changes based on:

- The temperature of the hydraulic fluid.

- The wear and tear on the rubber grippers.

- The 15-millisecond jitter in the local Wi-Fi network.

When the Swiss team—or any team—claims they can transfer skills across different robots, they are usually operating in highly controlled environments with massive safety margins. They slow the robots down. They use soft-touch sensors. They remove the "noise" that actually defines real-world operation.

Real-world robotics isn't about "understanding" a task; it's about managing the error. A transferred skill is essentially "pre-packaged error" from a different system. You are starting your optimization from a point of failure.

The Cost of the "Shortcut"

I’ve seen companies spend eighteen months trying to "fine-tune" a transferred model from a university lab, only to realize they could have trained a bespoke model from scratch in three weeks using simulation and Reinforcement Learning (RL).

We are obsessed with the "shortcut" of transfer learning because we are afraid of the data bottleneck. We don't have enough real-world video of every robot doing every task. So, we try to cheat.

But this cheat comes with a hidden tax: The Black Box of Incompatibility.

When a bespoke model fails, you can trace it back to the reward function or the sensor input. When a "transferred" model fails, you have no idea if the failure is due to the new environment or a vestigial "memory" of the old robot's torque curves. You end up debugging a ghost.

The "People Also Ask" Delusion

If you look at what the public is asking about this technology, the questions are fundamentally flawed because they assume robots work like human brains.

"Can a robot learn to cook by watching a human?"

No. A human hand has 27 bones and a nervous system that has spent years learning to hide its own latency. A robot has three metal fingers and a 60 Hz control loop. Watching a human is useless because the robot cannot physically replicate the human’s "policy."

"Will skill transfer make robots cheaper?"

The opposite. Skill transfer requires massive compute to "translate" actions between platforms. It also requires high-end sensors to ensure the "translated" action isn't about to snap a limb. The cheapest, most efficient robots will always be those running hyper-specialized code designed for their specific hardware.

Simulation is the Only Transfer That Matters

If we want to talk about "Transfer," let's talk about the only version that actually works: Sim-to-Real.

Instead of transferring skills from Robot A to Robot B, we should be perfecting the transfer from Physics Engine X to Robot B.

In a simulation, we can randomize the physics. We can make the floor slippery, then sticky, then tilted. We can make the robot's arm heavy, then light. This creates a "Robust Policy"—a model that doesn't rely on the specifics of its body because it has been forced to succeed across a thousand variations of that body.

This isn't "transferring a skill." This is "training for uncertainty."
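As a rough sketch of the idea, the snippet below randomizes inertia and friction for a toy single-joint simulator and picks the controller setting with the best worst-case result across all sampled bodies. It is a crude stand-in for RL training: a grid search over one damping gain instead of a learned policy. The simulator, parameter ranges, candidate gains, and function names are all assumptions made for illustration.

```python
import random


def settle_error(kp, kd, inertia, friction, dt=0.002, duration=4.0):
    """Step a toy joint toward a 1 rad target; return |error| at the end."""
    pos, vel, target = 0.0, 0.0, 1.0
    for _ in range(round(duration / dt)):
        torque = kp * (target - pos) - kd * vel
        acc = (torque - friction * vel) / inertia
        vel += acc * dt
        pos += vel * dt
    return abs(target - pos)


def sample_body(rng):
    """Draw one randomized body: heavy or light arm, sticky or slippery joint."""
    return {"inertia": rng.uniform(0.5, 2.0), "friction": rng.uniform(0.05, 3.0)}


rng = random.Random(0)  # fixed seed so the sketch is repeatable
bodies = [sample_body(rng) for _ in range(100)]


def worst_case(kd, kp=20.0):
    """Largest final error for this gain across every randomized body."""
    return max(settle_error(kp, kd, **body) for body in bodies)


candidates = [0.5, 1.0, 2.0, 4.0, 8.0]
best_kd = min(candidates, key=worst_case)

print(f"most robust damping gain among candidates: {best_kd}")
```

The selection criterion is the point: the chosen gain is judged on the worst body in the batch, not on any single one.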

The Swiss research is an impressive academic exercise in mapping high-dimensional spaces. But for the person trying to deploy a fleet of robots in a warehouse or a hospital, it’s a siren song.

The Hard Truth About Robotic Intelligence

We want robots to be like us. We want to teach them a "concept" and have them apply it everywhere. But a robot is not a mind inhabiting a body; a robot is the body.

Every time you see a headline about "Seamless Skill Transfer," replace it with "Researchers Force Square Peg into Round Hole with Expensive Math."

The future of robotics isn't in sharing skills between different machines. It's in the radical specialization of hardware and the brutal efficiency of localized learning. If you want a robot to do a job, stop trying to give it a "brain" that was trained for someone else’s arms.

Stop looking for the universal translator. Start respecting the physics of the machine you actually built.

Build the bot. Train the bot. Forget the transfer.